What kind of “food clock” should Ultima Ratio Regum have?

(A request for feedback)

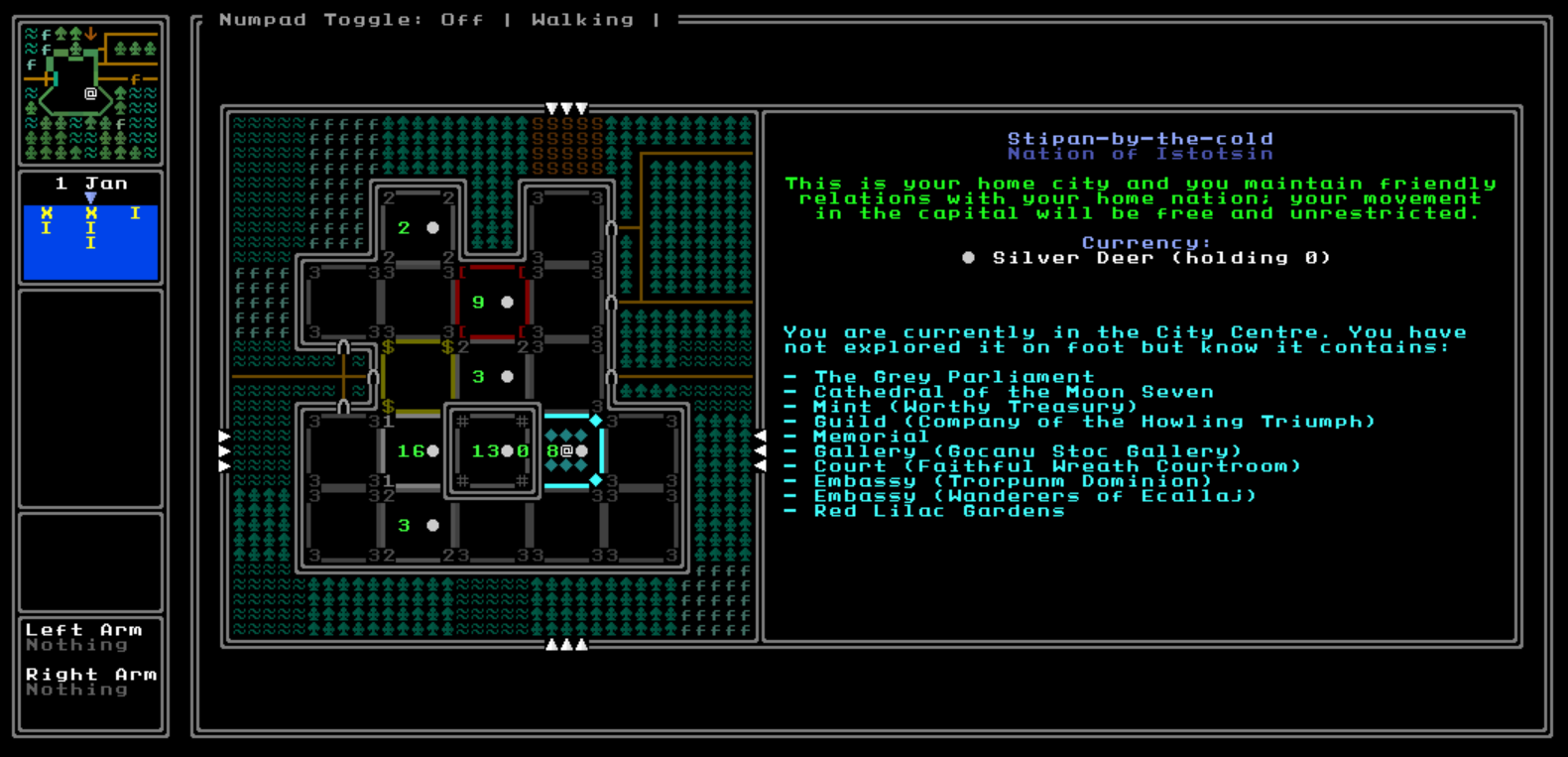

URR is back in active development and zooming towards a 1.0 release, with a 0.9 now well over 70% developed and due to be released at the end of this year. As I come to implement items, objectives, trade and so forth, I realise I now need to figure out what the main “food clock” in the game will be. Given the readership of my blog I shall assume most readers are familiar with this idea, but in case this is new: a “food clock” (or some similar term) is essentially something to keep you moving and stop you grinding in a procedurally-generated game, by having some kind of limited resource, or limited time, which can only be replenished by pushing onwards. In most classic roguelikes this is food – the player needs food to not die, food is limited, the player must advance through the dungeon in order to keep finding food. Some more modern roguelike-y games have alternatives, such as the advancing rebel fleet in FTL, which are more thematically appropriate for the games in question. “Food clocks” or their related brethren therefore have important design concerns in terms of how you want your player behaving and the extent to which the player can grind, as well as design questions about the specific implementation in the thematic or visual elements of a specific game. This is an interesting design topic that has gathered a fair bit of attention already, such as Josh Ge’s insightful piece on the topic here, RogueBasin’s advice about hunger in roguelikes, and indeed Roguelike Radio talked about the topic in great depth many moons ago in this episode. But with URR back in high-speed development and 0.9 approaching release, and replete with items, coins, trade, currencies, and more – some of which might be relevant to a potential food-clock-esque mechanic – the time has now come to figure out what kind of “clock” URR should have.

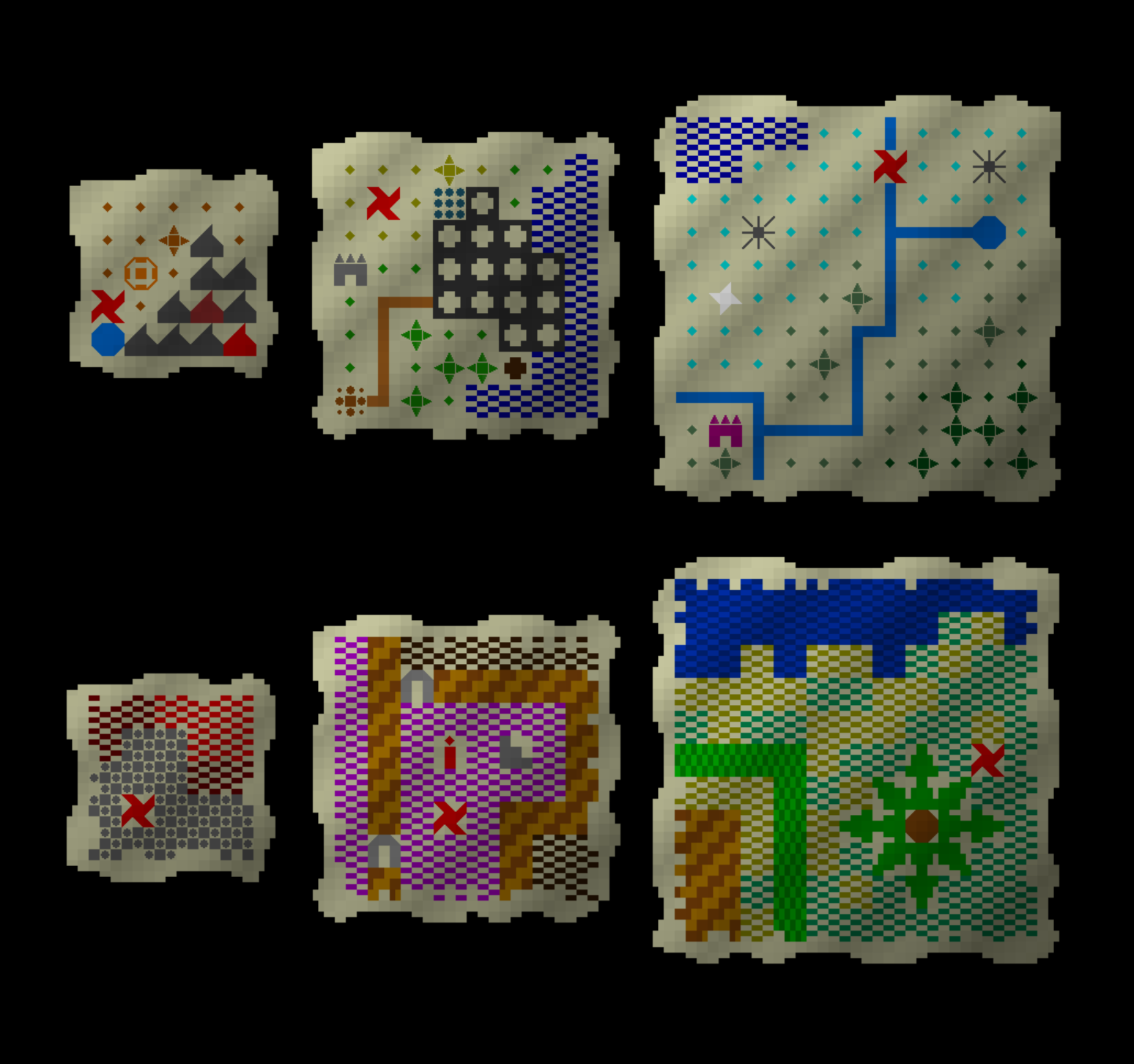

So: as development is now at maximum speed towards a 1.0 release, let’s first reiterate the game’s objective. Your objective will be to find nine items hidden throughout the world map. The implementation of treasure maps in 0.9 is a part of this, but these items could be buried underground, hidden in homes, underneath buildings, in sewers, in the inventories of NPCs, in tombs, vaults, within secret compartments of university libraries, or basically anywhere else. The core gameplay is to gather information from the game’s procedurally-generated books, buildings, shrines, graves, records, people, languages, lands and more, to find them. This means the standard “explore the dungeon to find food” thing is obviously not going to work: but what are the alternatives? A number come to mind.

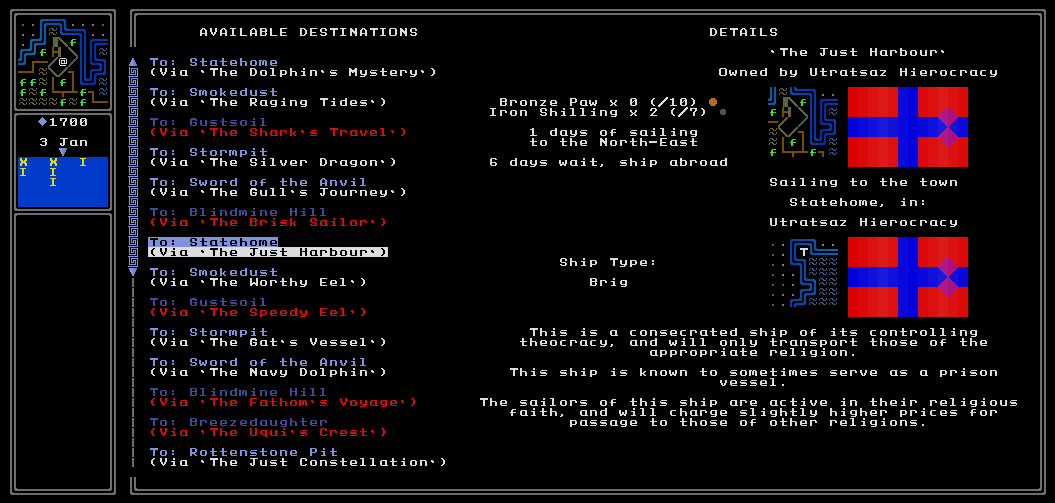

1. The standard roguelike “food clock”. URR (in the 0.9 version on my laptop) now has coins, different currencies, and exchange rates, and the ability to buy and sell and trade items around the globe! The first option for the clock, therefore, is to go with the standard food clock. Perhaps the player starts off with a given amount of food which is used up automatically over time, and you starve after too long without food. This could be varied to have different kinds of foods with different benefits / drawbacks; maybe the ability to carry stockpiled water for crossing deserts, for example; particularly long-lasting foods for entering polar regions; and the like. Alternatively we could have a single catch-all item called “supplies” or something which is used up as you move around the world map. The obvious advantages of this are that it’s simple and easy to understand as roguelike clocks go, and would be very easy and quite fun to procedurally-generate, and has opportunities for things like water in deserts which would be interesting and appropriate; on the other hand though I wouldn’t want it to be frustrating to be constantly hunting down places one can acquire food or “supplies”, so we could abstract this out a little (e.g. rather than supplies shops, every time you enter/exit a town or city you can choose to buy some supplies).

2. A fixed length of time. This model would be unusual, but might well fit the core objective of the game. Given the overall thematic idea of hunting down these elements of this Umberto-Eco-esque conspiracy across the globe, perhaps the clock should just be a fixed period of time, such as (say) five in-game years. The game would then end when you die; when you acquire all nine; or when the five years run out, and then maybe you get a range of endings depending on how many of the nine key items you’ve been able to acquire? I think this would be quite bold and interesting, and also quite singular – it reminds me, for example, of the 1001 lives you start with in Aban Hawkins and the 1001 Spikes. I also think this could generate some potentially interesting gameplay strategies later in development based on how one uses one’s time and so forth. The downside, of course, is that given the game’s core quest it would be essentially impossible to “make sure” the game is winnable, without giving the game such a long timer that there’s no real clock there. A short clock might work sometimes but there would always be the possibility of some unlucky generation which puts all nine key items in really difficult places, or is otherwise unusually tricky to complete. Obviously with balance and playtesting we could find a length of time which makes the game normally winnable with optimal play, but it might be extremely difficult to make sure the game is always winnable with a fixed time clock. The complexity of the game’s world and how the player might navigate it will probably make it impossible to ensure the game is winnable 100% of the time under this model – I think.

3. A length of time extended as you complete objectives. Under this model you would start off with a period of time, e.g. six in-game months, and then every key item you get you gain six months extra to continue your investigations. Then, as above, the game ends when you a) die, b) collect all nine, or c) run out of time. This one seems like it has potential for obvious reasons, although I would of course have to make sure there is, for instance, always at least one key item that is somewhat “accessible” at the game’s start. Although I do like the idea of the tighter time and therefore decision-making pressure this could generate, I also think option 2 (or 1) might in the future yield some interesting gameplay strategies dependent on having longer to conduct your investigations, trade, gather information, etc. Under this model you would also be engaging with the clock from the moment the game starts – whereas the second model would easily allow people to dither around and then panic later on as the clock closes in, which could be an experiential problem – and this would certainly get players thinking hard about where they go and how they go there (walking, carriage, mountain passes, caravans, ships, etc).

4. Simply staying alive. Although at the time of writing one cannot die in URR, the game does now generate all weapons, armour, firearms, bows, and all that other sort of stuff, and I would still like to implement some kind of combat mechanic in the future. At the same time, quite a bit of structure is in the game (even if not presently activated) for things like arresting the player, punishing crimes, and so forth. One option would be to implement a system where your investigations and trading and travel are guaranteed over time to annoy or offend or bother a greater and greater percentage of people, cities, states, and so forth, and thus the food clock is simply remaining alive (or rather, remaining alive / not incarcerated). Much like all the others I think this one has potential – weighing up what you need to do to complete objectives vs who will be upset if, for instance, you dig up the floor of a mansion to find a key item, could be an interesting mechanic to flesh out across the whole game. With that said, though, I think it could also possibly lead to tricky design choices, and I don’t really want to limit the player’s access to the world too much in this particular way – but I think making one’s free survival increasingly challenging as time goes by could be interesting. There are obviously subsets of this we could consider: do people become less helpful over time? People become more hostile? Civilizations close their borders to you? People actively pursue you in the game world? Or a million other permutations.

5. Something else! Do you have another idea? If so, no matter how “traditional” or radical it might be, please do let me know here in the comments. What might make sense for this kind of game? What other sorts of “clock” could we have to keep the player moving forward and pursuing their objectives? I think these four ideas are fine, but I’m very keen to get outside ideas. When you stare at something for so long, it can become hard to get outside one’s normal thought processes.
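To make option 3 a little more concrete, here is a minimal sketch of a deadline that is pushed back each time a key item is found. Everything in it is an assumption for illustration: the `ExtendingClock` name, the simplified 30-day month, and the six-month figures (taken from the example above) are not URR’s actual code or calendar.

```python
from dataclasses import dataclass

DAYS_PER_MONTH = 30  # assumption: a simplified calendar, not URR's real one


@dataclass
class ExtendingClock:
    """Hypothetical model of option 3: a deadline extended by each key item."""
    days_left: int = 6 * DAYS_PER_MONTH       # starting allowance
    extension_days: int = 6 * DAYS_PER_MONTH  # bonus per key item found
    items_found: int = 0
    items_needed: int = 9

    def advance(self, days: int) -> None:
        """Spend travel or investigation time; the clock never goes below zero."""
        self.days_left = max(0, self.days_left - days)

    def find_item(self) -> None:
        """Record a key item and push the deadline back, if time remains."""
        if self.days_left > 0:
            self.items_found += 1
            self.days_left += self.extension_days

    def status(self) -> str:
        """Return 'won', 'out_of_time', or 'running'."""
        if self.items_found >= self.items_needed:
            return "won"
        if self.days_left <= 0:
            return "out_of_time"
        return "running"
```

Setting `extension_days` to zero and raising the starting allowance turns the same sketch into the fixed clock of option 2, which is one way to compare the two during playtesting.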

Thank you for reading everyone! These are the four ideas I’ve had so far, but I’m sure there are many possibilities, and indeed, potentially combinations of the above. I would really like to hear feedback on this, so any thoughts you have, however brief, or however detailed, please do post them in the comments below. I’ll be reading and responding to all of them, and any input will be massively appreciated. This isn’t something I want to have to go back and change later, so I really want to get URR’s “clock” correct the first time around. Thanks so much, folks, and I’m really looking forward to reading your thoughts…!

Your reasoning for 3 (extending time as objectives are completed) makes sense, as a source of constant tension.

Spelunky and Cogmind do this by having a level-specific ‘clock’.

For me personally, atmosphere and thematic integration of mechanics is most important. As such, it could go with factions becoming more hostile over time, an apocalyptic event coming closer, the artifacts themselves deteriorating or disintegrating… Finding something that feels good for YOU would be a nice exercise.

Thanks Dylan! These are great thoughts, I really appreciate them. I do think the “factions increasingly becoming more hostile” is a rather promising and intriguing model. It could even be something grand and ambitious, like EVERY faction (religion, cult, state, individual NPC) becomes *fractionally* more hostile every month, so you’re making strategic choices about who to keep allied with, and everyone else becomes more and more hostile…
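A minimal sketch of that monthly drift might look like the following. The function name, the 0 to 1 hostility scale, and the drift and relief amounts are all assumptions chosen for illustration, not values from the game.

```python
def drift_hostility(hostility, tended=frozenset(), monthly_drift=0.01, relief=0.05):
    """Return a new faction -> hostility mapping after one in-game month.

    Every faction drifts upward by `monthly_drift`. Factions the player
    actively engaged with this month (`tended`) are also reduced by `relief`.
    Values are clamped to the range 0.0 (friendly) to 1.0 (fully hostile).
    """
    updated = {}
    for faction, level in hostility.items():
        level += monthly_drift
        if faction in tended:
            level -= relief
        updated[faction] = min(1.0, max(0.0, level))
    return updated


# Usage: the player courts one faction and ignores the other.
factions = {"Cult of the Moon": 0.10, "Northern League": 0.10}
factions = drift_hostility(factions, tended={"Cult of the Moon"})
```

Because `relief` is larger than `monthly_drift`, a faction the player keeps engaging with stays ahead of the background drift, while neglected factions creep towards hostility.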

A flavor option for 2/3:

Have an opponent who is also after those items. To “win” the game you do not need to gather all of them, you need to get more of them than the enemy. As you go along you can hear vague rumors about where your opponent is and what he is doing, which can by itself be a kind of hint. Sometimes when you reach the location of an expected item you might find only a mocking note from the enemy; sometimes the enemy will follow up a lead that you had already plundered.

If option 2 is in play, when the time limit comes up it’s time for a showdown in a certain place that the player might need to research as well. If the player does not show up, the victory goes by default to the opposing faction.

Negatives are: seems a lot of effort to implement, and can be frustrating if an enemy that can’t really be interacted with keeps upstaging you.

Disclaimer: the idea is probably the result of always wishing for “A night in lonesome October” videogame.

Jiharo, this is such an interesting idea, thank you! This is EXACTLY the kind of weird or wacky idea I was hoping people would give me – thanks so much. Combined with what another poster wrote about a “rival” I think there is a lot of potential in some of these sorts of ideas; maybe you’re competing with others, or some kind of fame system, or something… yeah, fantastic thoughts, thanks again :).

I remember food back when it was a timer in CoQ. Nice to cap you on planning for long trips, but otherwise just an annoyance until you gathered enough of it around the world. Bit hard to cap the amount of food a player can find in an openish setting. Decent way to limit longer trips, but how fun it is really depends on how well implemented it is.

I remember the tagline of the game being set in a rough Scientific Revolution where one has to put together cultural and archaeological clues. It really brings to mind an idea of an academic rival – competing for university funding, fame, publications, and mummified women.

Bit like a timer, but you don’t necessarily know what he knows, what he knows you know, where he is, and what he has compared to you. And unlike a timer, his progress can be lucky or unlucky. Spend time being faster or razzle him up with subterfuge. Definitely something that can range anywhere from a barebones implementation (a timer with lil pop-up events) to a fully realized rival with finite resources, finite knowledge, and his own physical team.

Klinger, thank you so much for this! I love this rival idea; that’s really, really interesting to me. Or extended more broadly to a number of other rivals, or other factions / NPCs who are also working within this same space… but yeah, I think this is a really interesting and really unusual idea, and one that would fit the setting well. I shall give it more thought… thank you so much!

I think the very best clocks are ones that allow nuanced interplay instead of being hard boundaries. The alarm in Invisible Inc. is probably my favorite example. It does what it needs to do – making every turn precious and forcing you to think which loot is safe, instead of crawling through and getting everything without risk. But the badness of each alarm level is totally set in context. Alarm level 2 is a lot worse if you’re weak on hacking; ignorable if you have a strong setup. Alarm level 3 always spawns a guard – but if you’ve found the spawner, then you’ll know whether it’s going to be right in the thick of things or off in the boonies that you looted turns ago.

To translate that for URR – I think that 4 is a good place to start (the conspiracy is out to get you). But maybe try something like modeling the suspicion meter civilization-by-civilization, with a sort of lead inquisitor assigned to each one. Then you get access to a lot of fiddly bits. Spitballing:

1. You can make it part of the mission to learn the identity of the civilization’s conspirator. If you know who, then you can learn which areas they frequent and avoid asking incriminating-sounding questions in their patch.

2. The conspirator’s tools to hamper you involve using influence on local authorities – so areas where people like you are safer. In the vein of “IF you’ve asked too many questions that make it seem you’re on to something, AND the conspirator makes it to a city you’re currently residing in, THEN the city rolls for a game-losing arrest, but that’s modified by your own skills, your local supporters, whether you have a record already, etc.” Ideally this would work out such that your starting civilization is more of “easy mode” because people are more positively disposed to you. (Maybe it’s even in the game’s fiction that it starts upon you learning who your local conspirator is, and by extension that there’s a conspiracy at all.)

3. Maybe you can have side-quests that have a sort of risk reward where they’ll reduce your local suspicion if you succeed, but represent you dithering away conspiracy clock if you fail. Oh, you have restored holy artifact X to the church of Y, surely you’re an alright person!

4. You could constrain the *verbs of interaction* of conspiracy based on local factors – maybe the way you can lose in a pacifist govt is fundamentally different than what can happen to you in autocracies or etc. Different characters would have incentives to try to go to places that might “get hot” first to look for clues before it gets out of hand.

Etc etc. Of course I have no idea what the backend looks like and level of effort for all of these. But the nice thing about modeling is that you can always start by saying “Fundamentally this is just a straight clock per civilization, but I just named the clock a person’s name :D” and then add more bits and bobs as opportunity presents.
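The “straight clock per civilization with a person’s name on it” idea, together with the IF / AND / THEN arrest rule from point 2, could be sketched along these lines. All names, thresholds, and modifier sizes here are hypothetical and only meant to show the shape of the rule.

```python
import random
from dataclasses import dataclass


@dataclass
class CivClock:
    """Hypothetical per-civilization suspicion clock, named after its inquisitor."""
    inquisitor: str
    inquisitor_city: str
    suspicion: float = 0.0
    threshold: float = 1.0  # suspicion needed before an arrest can be attempted


def arrest_chance(clock, player_city, local_support=0.0, has_record=False, base=0.5):
    """Chance of a game-losing arrest this turn, clamped to the range 0.0 to 1.0.

    IF suspicion has reached the threshold AND the inquisitor is in the
    player's current city, THEN there is a chance of arrest, lowered by
    local support and raised by an existing criminal record.
    """
    if clock.suspicion < clock.threshold:
        return 0.0
    if clock.inquisitor_city != player_city:
        return 0.0
    chance = base - local_support + (0.2 if has_record else 0.0)
    return min(1.0, max(0.0, chance))


def roll_for_arrest(clock, player_city, rng, **modifiers):
    """Roll against arrest_chance using the supplied random.Random instance."""
    return rng.random() < arrest_chance(clock, player_city, **modifiers)


# Usage: a well-liked player in the inquisitor's city.
clock = CivClock(inquisitor="Aurelia Venn", inquisitor_city="Port", suspicion=1.2)
arrested = roll_for_arrest(clock, "Port", random.Random(), local_support=0.3)
```

Passing the random number generator in explicitly keeps the roll reproducible when a seeded `random.Random` is used, which tends to help when balancing a rule like this.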

Collin, thanks so much for taking the time to write this! I really appreciate the thoughts. Yeah, a lot of the feedback I’ve seen here on the blog, and the other places where I posted this, shows an interest in the sort of “conspiracy is out to get you” / “factions become hostile” / “you have rivals” models, and I think these are all very very interesting.

1) I LOVE this idea. Seriously. I think this is really, really, really interesting and promising. I’ll have to think some more about implementation.

2) I also like this idea a lot. Like I posted in another thread, maybe there’s a background “hostility” where every single faction and person becomes a TINY bit more hostile every month, say, and so with those you’re friendly with and engaging with you can easily keep ahead of the background “resentment clock” (!) but others will slowly but surely come to like you less…

3) Yes, this one also has potential!

4) Again, I think this is awesome.

Collin, again, thanks so much for this. You’ve REALLY hit the sort of game I’m after with your ideas and there are so many fantastic thoughts here. I shall now go away and think on all of these…

Here’s a thought: no clock. URR is the ultimate sandbox after all, with so much to see and do in a single city, many players might see no need to ever go anywhere else or do anything specific to have fun in the game. So, let them! Let the grand quest be only a pretext. Some players might even decide to go find one or two of the McGuffins in-between faffing around, if they’re not too far out of the way. Who cares. It’s for them.

A very fair point! Alas, it doesn’t really match my vision for the game; I’ve never really been trying to make a “sandbox game”, but rather a specific quest / storyline within a “sandbox world”. Which I know is a very unusual thing! But it’s what excites and interests me, that experience of choosing where to travel next, exploring new spaces, but also having to make strategic choices about where/when you go, your resources, the pursuit of the quest… I can’t rule out a sandbox mode after 1.0, but it’s not my core objective!

I really like the idea others mentioned of a rival or conspirators that act as a clock to try and take you down, especially if they can generate interesting scenarios you can interact with as Collin above mentioned.

I haven’t played any version of URR yet and I’ve only recently started following its development so this may be a bit off-base, but my first thought was money. Assuming the player is working for an organization, that organization gives them some set allowance for everything. If traveling/accommodations/etc. cost money, then the player needs to strategize their trip across the world. Since trading is a thing, perhaps travel and accommodation costs can be much more costly than the amount you’d make from normal trades, but if you find specific artifacts and talk to the people around town you might find a noble of some sort who would pay big money for the artifact. If bribery is a thing in the game, then that can interplay with the money clock as well.

Thanks so much for the comment Hussain! I agree, I think the “factions conspire against you” idea is very fresh and original, and one I’m definitely going to think more about.

Re: money: yes, absolutely! Money is coming in 0.9 (release end of this year) and I definitely intend for managing money to be a major strategic element in the game that determines one’s progress, where you can go, what you can buy, and so forth. This very weekend, in fact, I’ve been developing the functions in the game for calculating prices, exchange rates, giving change, buying/selling, and chartering ships! All most exciting :).

I share my namesake’s fear that you will ruin the joy of sandbox exploration by putting the player under pressure, but I also like strategy games, for all that.

I could be wrong, forgive me for not paying attention, but I don’t think you’ve implemented any sort of player power/experience stats yet, or equipment or resource stockpiling, so what’s to grind? Therefore, you don’t need a clock yet and it’s a solution looking for a problem.

Besides, I like the mechanism of getting-yourself-into-trouble as a way of inciting the player to use strategy. This is found in Dwarf Fortress and in Angband. In short, if you venture over THERE, you’ll be attacked, you’ll be in a series of unfortunate crises, and you’ll have to call on all kinds of ad-hoc resources and cunning ruses to get out again. The thing is, you want to venture over THERE because you’re human and greedy and you like treasure. Players don’t have to be forced to do this.

So Angband actually has no food clock in the overall game – you can potter around at dungeon level one for as long as you like, regenerating the level and finding more food and spawning more incredibly easy monsters which barely advance your experience points at all. Except you won’t do that, because you’re an impatient human with a limited real-world lifespan, and an ego, and the desire to explore. Then before you know it you’re at level 1500, trying to get artifacts from the clutches of high-level unique monsters, and having to deal with being confused or scared or blinded, and acid attacks have degraded your AC and something with fire breath has just destroyed your Word of Recall scroll, so you’re stuck down THERE.

Technically one of the risks at that point is starving, but it’s way down the list. You have more pressing concerns about running out of scrolls that blink you out of trouble or potions that fix your conditions. Like I say, there’s no main clock in the game, and no need for one: strategy comes about as a result of being tempted to venture into a dangerous place, and the monsters then attempt to ruin every kind of ability the player has in multiple, demoralising ways, creating the urgent need to fix multiple problems and escape the level – whereupon the pressure is off again, but did you get the treasure you came for?

I mention DF because of a fascinating variation: leaving aside the dangers of deep mining, even if you stay near the surface and craft a lot of material wealth, you will as a result increase the size and danger of the regular goblin attacks. (I suppose they have heard of your beautiful wall-carvings, and ornamental garnet-studded furniture, and got jealous.) The point being, again, that it’s player-determined pressure. You get yourself into trouble voluntarily because you’re greedy and curious.

This is great for those like my namesake who would prefer to potter around doing humble tourist stuff, because no challenge will be triggered that way.

For contrast, Brogue definitely has a food clock, which means that you have to move quickly through the levels, strategically leaving things behind and not backtracking much. The original Rogue also had one, although I think it was important to kill everything on every level for the experience points – the food clock just created pressure to be a more efficient perfectionist.

I really don’t think this mechanism suits your distinctly open-world game, which is presumably not supposed to be a linear race.

What you should do is investigate and implement forms of danger, ways to slowly degrade the player’s power and ability and create a sense of threat, attritional loss and doom: see what kind of strategies emerge from these situations, and then relegate all that to isolated little parts of the game – buildings, maybe – which the player is not under any time pressure to get involved with, and need not enter into at all, except in order to gain power and to win. It would be like a wide world to explore freely, dotted with tempting tar pits.

Thanks OF! So, the goal has always been to make a vast world but one with a clear quest, where a large part of what you’re doing is making choices about where to visit and when to visit and so forth. I’ve always been captivated by games that give a sense of a “larger world” and I want to evoke that here; I think it makes exploring that world much more interesting and…. grounded, or consequential?, than having completely free movement.

Your clock/mechanics point is interesting! I’m starting to work on these now because I want to start getting a sense of what moving around the game world will be like as that will help me structure the clues in the game world, and ALSO to add gameplay mechanics people can be playing with and exploring (and sending me feedback on!) in the interim as well. I really appreciate what you wrote about both DF and Angband, these are interesting models and will definitely factor into my thinking about these questions going forward. And you’re right, I’m not looking for a *linear* race but I’m definitely looking for something with drive and direction, albeit with that drive and direction pushing you around a vast open world. Again, thank you SO much for these thoughts!

You are a renowned relic hunter framed for the theft of an ancient artifact belonging to a powerful organization/person and are sentenced to death. While awaiting execution in the capital city’s prisons, you are met by a mysterious figure (maybe the person who framed you in order to force you into taking the job??) and offered a deal. Retrieve all 9 artifacts and your (alleged) crimes will be forgotten and you will be pardoned. After accepting the deal, you are given a hint (map, riddle, special key, etc.) to the first and nearest of the nine artifacts and told where to return it once it has been acquired (maybe a random location in the city nearest to the artifact?).

You will then have a year and a day to retrieve the artifact and return it. Finding and returning the artifact will result in you receiving a small reward (gold, magic item, horse, food, etc.), being given the next clue, and having your life extended by another year and a day. If you fail, the mysterious figure will send agents out to recover you and you will be returned and executed. You can always try to evade them (disguise or maybe fleeing to mountain region?) or kill them (if you can manage it…). If you are caught after you have retrieved the artifact, then the agents will escort you back to the mysterious figure. You will be given a warning (threat) and you will not be given a reward, but you will be allowed to continue. Once all artifacts are recovered, the player is officially pardoned and the game ends (but they can continue playing as a sandbox world with the friends and enemies they made?).

The locations of the artifacts are given to you in order of difficulty but you can still retrieve them in any order (if you hear rumors or uncover their locations yourself). Your reward for returning the artifacts will scale since the figure can trust you a bit more with each successful recovery and you prove that you are capable. Maybe the mysterious figure posted bounties on all of the artifacts, so there are opportunists searching for the artifacts and you may come across them from time-to-time…

I think food, drink, and sleep should provide the player with buffs depending upon the quality/tier (like Valheim) and only provide very small debuffs for not doing it. That way, the game won’t feel tedious since you won’t *have* to do it but you will *want* to do it. Cheap and easy-to-make food/drinks will provide fewer stat buffs than expensive and hard-to-prepare food/drinks. Also, potions/drugs can be used as temporary solutions but will cause side-effects…

These are great thoughts Taylor, thank you so much for taking the time! Your first paragraph is reasonably close to what I indeed have in mind – I had originally been thinking you’d always start from a relatively wealthy family, as this is a game with mechanics around trade, time, travel, money, etc, but maybe a difficulty setting could choose where you start and your starting resources and so forth, I’ll have to give that some thought. I also like the possibility of various resources giving you various bonuses and the like, I’m starting to think about that now. I also definitely agree that systems around trust and how much various factions like you and so on really ties in well with the game. The feedback I’ve got here and elsewhere has been massively helpful and I’m already developing a few ideas which I think have some solid potential. Thanks again so much for taking the time!

No problem! I just wanted to throw out an idea that may serve as inspiration.

Personally, I would add two separate game modes. A story mode that has tight constraints and a clear objective to achieve and a sandbox mode with no real objective (besides not dying). In either mode the player can customize their starting location, money, items, faction, attributes/abilities, etc. and have a personalized character that matches the way they intend to play the game (starting as a beggar, librarian, mercenary, merchant, lord/lady, etc.). This way, players can set the difficulty of the game themselves by picking different starting scenarios (like Dwarf Fortress). Also, if there is a leaderboard then you can just adjust the player’s score in the story mode based upon the options they choose when starting (like ADOM).

Thanks Taylor, two game modes is definitely a possibility!

As someone who perennially struggles with managing time in “long fixed unmoveable time” I don’t have a great deal to add except that I agree that whatever the “clock” is, it needs to be responsive/malleable in some way. Not just a “5 years to doom” sort of thing.

I also think that the idea of a clock that’s based on *you* is interesting (AKA the limit might be conspirators or rival hunters trying to catch up with you / sabotage you in some way), but could also be paired with a more “global” timer – one that if left to its own devices will continue to advance to an unfavorable end state. Perhaps the return of that old idea about a “Grand conspiracy” you’re trying to stop, ya know?

And finally, while it’s firmly out of scope for this update, I think a “conspicuousness” level tracked by locale would be a very neat tie in to any kind of “targeted” clock. After all, some strange and rude foreigner in strange garb poking his nose around an abbey in the capital and throwing money all over ought to bring down the undoubtedly-shadowy forces conspiring to stop your mission more than that same foreigner who’s paid enough attention to your lovingly-generated world to fit in and wear local garb / not ask strange questions in large cities etc.

Love that little clock graphic too!

Yes, definitely! I’d had an “awareness” thing planned for a while, but I think there’s a lot of potential in the idea of factions, nations, religions, people, whatever, gradually becoming more hostile with time and so forth, and having ways to mitigate these. Glad you like the pocket watch – those are now in-game trade items!

Along the lines of the other “rivals” comments, but what if *all* the factions were looking for these items? Your conversations with factions could convince them you’re working for or against them. You could also leave items with these factions to gain a stronger alliance, and have to work later to get them back. The relationships all degrade over time, and you never have enough to keep them all happy, so some will be displeased with your progress while others will be downright hostile.

Thanks Rhile! That is also a REALLY interesting idea, I like that a lot! Yes, maybe everyone is seeking them out… yeah, that has a lot of potential. Thank you for suggesting it!

Almost all (if not actually all) of the modern major RLs (UnReal World, DF adventure mode, Caves of Qud, Cataclysm: DDA) have a food clock that is primarily linked to survival – there are quests in these games, but there is no main quest or overarching plot, like the winning condition of the upcoming URR, so these games challenge the player with the task of keeping alive for as long as possible, and their food mechanics are a part of that challenge. So if you want to make the “food clock” actually the food clock, then you should implement it in a game-like unrealistic way, not in this life simulating realistic way, like it has been mentioned in a comment above – instead of refilling the hunger and thirst meters, certain foods and drinks will give the player some buffs/debuffs that may be utilized strategically. Like consuming an alcoholic beverage will buff the player’s strength and debuff his/her intelligence/intellect for a day, or will help in a conversation in an inn. If you are planning to make the class/profession thing that you had in an early URR version (I remember there used to be things like librarian and demonologist or something like that) then maybe the same consumable items will have different effects on players with different character setups, like librarians will never get as much strength from taking a pint of beer compared to a warrior. Buuuut maybe it’s not that fun anyway. I think having the main quest puts URR closer to traditional quest adventure games, like Death Gate or King’s Quest, so things like consuming food for alleviating hunger and sleeping to get more energy will kinda make the game less game-y and closer to a life simulation, which URR is not trying to be. But you kinda still need some clock for the player, especially if it would be possible to complete the game without any combat. No combat means no grinding, and no grinding means no need for a traditional food clock, but then what should be the losing conditions for the stealth run, right?

Something has to be a threat for the player, but maybe not strictly linked to time. This is why the ideas mentioned in the comments above of a rivaling party on the same quest, or different factions, or financial restraints, or assassins trying to stop you as you get closer to your final goal, become very interesting. Money should definitely play a bigger role as a challenge for the player in URR instead of the physiological needs. I guess you will have to try out a few different approaches to see which best fits the game you have in mind.

Oh, yeah, I’m not looking to make a survival game at all, but I do want something where your movement choices and money-spending choices are strategic and thoughtful. That’s the kind of balance we’re going for (so yes, definitely not meticulous detailed survival-sim style stuff). I really appreciate these thoughts Pavel! Yeah, I’m increasingly leaning in the money direction as well. As I code 0.9 I’m really excited by some of the money dynamics I’m putting in here, it feels very different from any game I’ve seen, honestly, and I think expanding this further has lots of potential…

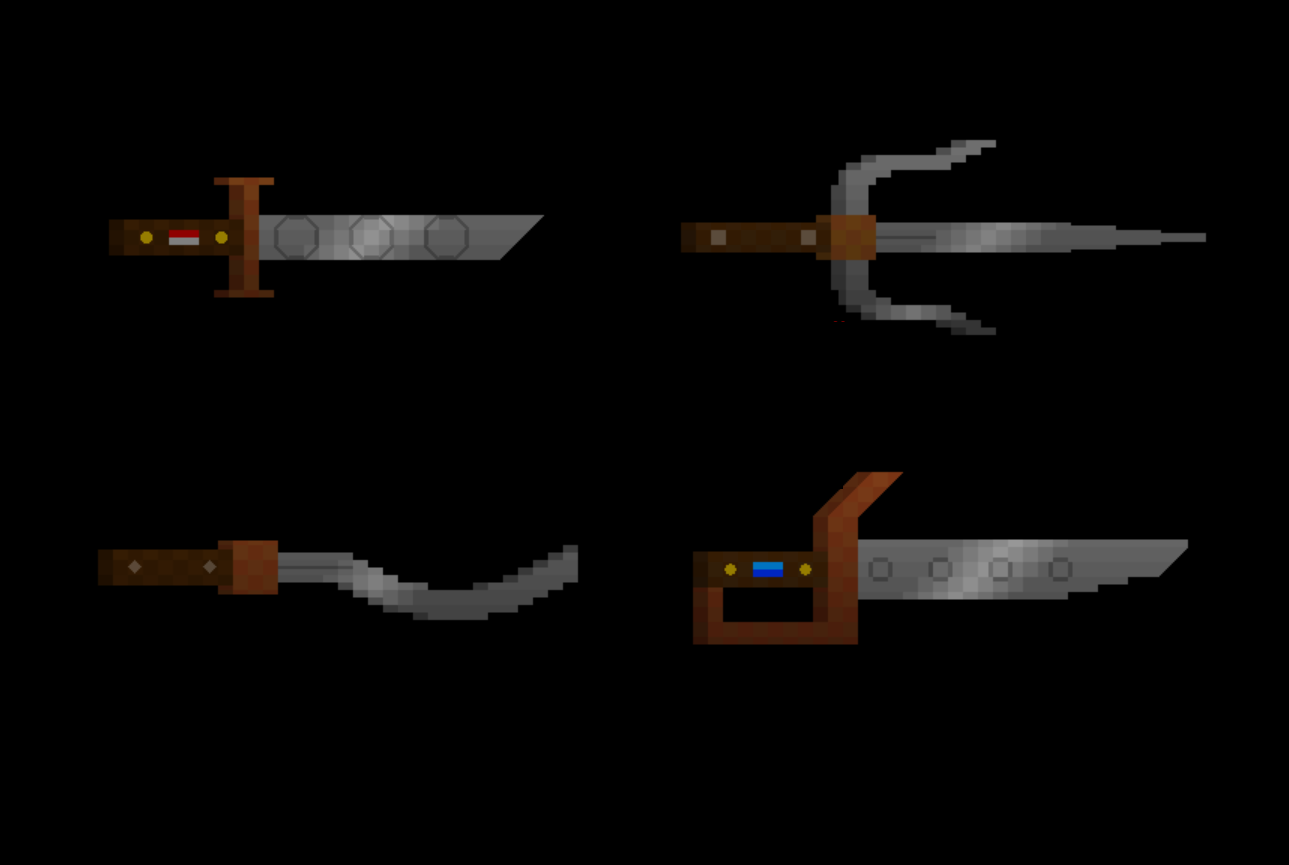

Your progress is astonishing and very inspirational! I’ve seen the new generators for armour and board games you shared on FB and Twitter the other day, that was totally unexpected and looks absolutely incredible. I guess if you are going to make generators for traditional foods and dishes later, it would only be expected to add some sort of food-consuming mechanic, but again, yes, not in a life-simulating manner.

On the topic of money – if you haven’t done so already, I’d like to throw an idea at you: adding different currency division types, as in not only decimal, but also duodecimal, hexadecimal, sexagesimal, and maybe some other exotic systems, all of which have actually been used for counting currency at some point in history. Maybe even something as crazy as the money system in Britain before 1971 or the fictional one introduced in Harry Potter. And maybe even apply the same counting system nation-wide to everything, including time measurement, like having 13 months in a year, or 10 hours in a day, depending on the nation. But that would make it hard for the player to keep track of time, because there already is a standard calendar and time measurement in URR, so idk, just an idea that seemed cool when I started writing this :)

Thanks Pavel! You’re very kind, and I’m so glad you like them. See my reply to crowbar re: food; I think just some very basic resources that you pay for in order to travel around the map safely. Also, yes, different currencies do have different divisors! Right now I think the options are that 4/6/8/10/12 “small” coins go into one big one, but I’m sure I’ll add other ones later on. Re: calendars, HA, yes, I do intend to generate calendars later on! Great minds think alike 🙂
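
As a rough illustration of the divisor idea above, here is a minimal Python sketch of splitting an amount into “big” and “small” coins. The divisor options (4/6/8/10/12) come from the reply; the function name, the error handling and the example amounts are assumptions made for illustration and are not taken from URR’s code.

```python
from typing import Tuple

# Divisor options mentioned in the reply: how many "small" coins make one "big" coin.
ALLOWED_DIVISORS = (4, 6, 8, 10, 12)


def split_coins(total_small: int, divisor: int) -> Tuple[int, int]:
    """Return (big, small) coins for an amount given in small coins.

    Hypothetical helper: the name and checks are illustrative only.
    """
    if divisor not in ALLOWED_DIVISORS:
        raise ValueError(f"unsupported divisor: {divisor}")
    if total_small < 0:
        raise ValueError("amount cannot be negative")
    # divmod gives the whole big coins and the leftover small coins.
    return divmod(total_small, divisor)


if __name__ == "__main__":
    # The same 30 small coins look different in each nation's currency.
    for divisor in ALLOWED_DIVISORS:
        big, small = split_coins(30, divisor)
        print(f"divisor {divisor}: {big} big, {small} small")
```

The point of the sketch is only that one underlying amount reads differently depending on the nation’s divisor, which is where the strategic friction of trading between currencies would come from.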

I like the idea of either a generous fixed clock (which is like Fallout 1) with variable starting difficulty levels, or the extending clock. If you do go with an extending clock, I would suggest making the first countdown the most generous, then as you accomplish objectives the clock extends in smaller fixed intervals.
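
To make the extending-clock suggestion concrete, here is a minimal Python sketch, assuming time is counted in days. The class name, the default values of 120 and 30 days, and the rule that an expired clock cannot be extended are all assumptions for illustration, not anything confirmed for URR.

```python
class ExtendingClock:
    """Countdown with a generous first interval and smaller fixed extensions.

    Hypothetical sketch: names and numbers are illustrative only.
    """

    def __init__(self, initial_days: int = 120, extension_days: int = 30):
        if extension_days >= initial_days:
            # The suggestion is that the first countdown is the most generous.
            raise ValueError("extension must be smaller than the initial countdown")
        self.days_left = initial_days
        self.extension_days = extension_days

    @property
    def expired(self) -> bool:
        return self.days_left == 0

    def advance(self, days: int = 1) -> None:
        """Let game time pass; the clock never goes below zero."""
        self.days_left = max(0, self.days_left - days)

    def complete_objective(self) -> None:
        """Extend the clock by the fixed interval (assumed: no effect once expired)."""
        if not self.expired:
            self.days_left += self.extension_days


if __name__ == "__main__":
    clock = ExtendingClock()
    clock.advance(100)          # 20 days left
    clock.complete_objective()  # 50 days left
    print(clock.days_left, clock.expired)
```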

Other thoughts:

-A way for the player to extend the clock in ways beyond accomplishing the main objectives (i.e., finding the treasures). For example, doing something to disrupt the conspiracy by killing key leaders, stealing items, planting evidence or counter-propaganda, exposing their misdeeds to the public, etc.

-A hunger mechanic is not incompatible with a world-countdown clock, but as URR doesn’t seem to be intended to be a hardcore survival sim, if you do implement hunger/thirst/etc I suggest not making it too punishing.

-Another example of a countdown clock would be if the player has some sort of condition or disease that will eventually kill them (and may gradually inflict harm or degradation) unless they find treatment and an eventual cure. This is used for part of the main quest in Morrowind.

-Cultist Simulator uses countdowns in the form of menace qualities that gradually accumulate and need to be mitigated; your character may go insane, or get arrested, or die of illness unless you are able to reduce the affliction. As the player in URR gradually uncovers the conspiracy, they might get a sort of menace countdown in the form of notoriety, be hounded by the constables and eventually be overwhelmed (this is also similar to the star ratings in GTA).

Thanks for these thoughts crowbar!

1) Yeah, I increasingly like this idea; you can push the timer back, and so what you’re aiming to do is to make good strategic choices to advance the quest and hence give yourself more resources (time, money, whatever) with which to keep going.

2) Oh, absolutely. I’m currently working on a 3-part idea: a food resource you need everywhere in various amounts, a water resource you need everywhere in low amounts and in deserts in high amounts, and a “supplies” resource you need everywhere in small amounts, and in mountains / polar regions in high amounts. Some light strategy but nothing else, and it will integrate nicely with money and travel mechanics.

3) An interesting one! I don’t think the illness model fits here, though the player can of course die of old age…

4) Yeah, these are cool, I think this kind of multiple timer is definitely promising!
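
The three-resource idea in point 2 could be sketched roughly as below, in Python. Only the overall shape (water costs more in deserts, supplies cost more in mountains and polar regions) comes from the reply; the specific numbers, the terrain names, the function names, and the simplification that food costs the same everywhere are assumptions for illustration.

```python
from typing import Dict, Iterable

# Assumed per-tile baseline costs (illustrative numbers only).
BASE_COST: Dict[str, int] = {"food": 2, "water": 1, "supplies": 1}

# Assumed terrain-specific overrides: deserts need more water,
# mountains and polar regions need more supplies.
TERRAIN_OVERRIDES: Dict[str, Dict[str, int]] = {
    "desert": {"water": 4},
    "mountain": {"supplies": 4},
    "polar": {"supplies": 4},
}


def travel_cost(terrain: str) -> Dict[str, int]:
    """Resources consumed by crossing one tile of the given terrain."""
    cost = dict(BASE_COST)
    cost.update(TERRAIN_OVERRIDES.get(terrain, {}))
    return cost


def route_cost(terrains: Iterable[str]) -> Dict[str, int]:
    """Total resources needed for a route, given as a sequence of terrain names."""
    total = {name: 0 for name in BASE_COST}
    for terrain in terrains:
        for name, amount in travel_cost(terrain).items():
            total[name] += amount
    return total


if __name__ == "__main__":
    print(route_cost(["plains", "desert", "mountain"]))
```

A planner like this would let the player compare routes before buying resources, which is where the link to money and travel choices comes in.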

Multiple clocks feels promising for such a generative environment. Tracking ambient secrecy / research capacity / intervention capacity (all reduced over time) could be more surprising + fun than literal food/water/supplies

One variation on ideas so far:

~ There is a conspiracy [to rewrite history], [under cover of war].

~ You learn of it while [incapacitated / written off / your survival or knowledge of it is hidden]

World sequences:

1. cabal: they need to finalize their plans -> implement them -> cover it up. The longer they take, the harder it is to cover it up.

2. protagonal: you need to [recover], investigate + confirm the consp (w/o tipping them off), change the course of events. The slower you are, the less you can influence events. The faster you move, the less you recover.

3. global: everything evolves: people die and are replaced, plans change, facts grow stale. wait forever and an entirely different upheaval could alter world affairs.

Interlaced clocks:

#Central clock: Intervention. stopping the cover-up and war. Move fast and stop the war [or delay it or redirect it to your own ends?]; slower and at least stop [or alter] the rewriting; too slow and you can only prove part of the conspiracy, and record a competing alternative history for future generations to puzzle over.

#2nd clock: [per artefact/region] Secrecy. confirmation of information –> finding artefacts before the info gets stale. wait too long / be too indiscriminate in your search and you are discovered / discredited / a fake conspiracy is pinned on you, or artefacts are moved.

#3rd clock: Capacity. your capacity grows at first (as you [recover] / gain allies + tools), then is limited (by opposition / injury / avenues being blocked). you have to execute plans while they are still possible. some research can be done remotely, but there’s roundtrip communication time for each step, so you need to visit in person for detailed work.

In the spirit of the Daughter of Time, perfect capacity might be possible while sitting in your bed; leaving only surgical site visits to be done. Perfect secrecy gives more time per region. Perfect intervention makes more time for the other two [maybe various ‘draws’ are possible: pass the buck to the next gen on both sides; screw up possibility of both successful conspiring and unmasking; &c]. But in general all three provide urgency.

Hi SJK, these are awesome ideas! I do like a lot of these possibilities, a lot. The more I think about it the more I agree with your first comment in particular, that multiple clocks in this kind of environment definitely make the most sense. Your final paragraph is really interesting as well; I wonder whether I could have these various clocks and, as you say, have emphasizing one particular clock give you special or unique advantages while the other clocks spin on and get harder and harder for the player to navigate. In terms of letting the player pursue many different strategies, I do like that idea a lot. Thank you again for such a detailed comment, there are a lot of ideas here I will genuinely be thinking about in the coming year or two as development resumes (and hopefully, this time, doesn’t grind back to a halt again!).